How AI, identity abuse, and multi-channel deception are reshaping phishing, scams, and security awareness

Phishing is no longer the sloppy, typo-ridden nuisance it once was. It has matured into a polished, adaptive form of digital deception—fueled by generative AI, scaled across multiple channels, and increasingly designed to exploit trust rather than merely steal credentials. During the 2025 holiday season, Hoxhunt observed a 14x surge in AI-generated phishing attacks that bypassed email filters, with AI-assisted lures rising from 4% to 56% of reported attacks in December before easing in January. The message is unmistakable: fraud has become more persuasive, more contextual, and far more difficult to spot with legacy awareness habits alone. Source

What makes this shift especially dangerous is not just the quality of the lure, but the breadth of the battlefield. Modern social engineering now moves fluidly across email, text, voice calls, QR codes, and workplace collaboration tools. Hoxhunt reports that around 40% of phishing campaigns now extend beyond email, while QR phishing, or “quishing,” rose 25% year over year. CrowdStrike adds another warning: vishing surged 442% between H1 and H2 2024, showing how quickly threat actors are weaponizing voice-based deception and callback fraud. Source Source

The result is a new kind of risk environment—one where digital fraud is less about one malicious email and more about a coordinated campaign to impersonate, pressure, and manipulate across the channels people trust most.

Why Today’s Phishing Feels More Convincing

Generative AI has elevated the craft of deception

The old red flags are disappearing. AI-generated phishing content is cleaner, more natural, and far better at mimicking familiar tone, brand language, and conversational context. Instead of generic scare tactics, attackers now produce messages that feel routine: a shared file, an invoice request, a Teams prompt, a callback notice, a payroll update, or a message that appears to come from a known contact. Hoxhunt notes that phishing campaigns are increasingly using polished lures, malicious calendar invites, callback scams, recruitment fraud, and SVG attachments—evidence that attackers are designing around human workflow, not just technical weakness. Source

This helps explain why the human element remains central to breach risk. Verizon found that a human element was involved in 68% of breaches, and that the median time for users to fall for phishing is less than 60 seconds. In other words, the modern phishing problem is not merely one of awareness. It is one of reaction speed, trust calibration, and decision-making under pressure. Source

From Credential Theft to Business Disruption

The real targets are identities, access, and financial control

The goal of many phishing campaigns today is not simply inbox compromise. It is the theft of authenticated access, especially to cloud identities and business privileges. The evidence points to a broad identity trend: CrowdStrike reported that valid account abuse was the primary initial access method in 35% of cloud incidents in H1 2024. That makes identity compromise one of the most important entry paths in modern attacks. Source

The financial consequences are equally sobering. The FBI’s IC3 recorded 21,442 Business Email Compromise complaints and $2.77 billion in losses in 2024 alone. These are not fringe incidents. They are highly effective fraud operations that exploit vendor trust, executive authority, and familiar business processes to trigger unauthorized payments and data exposure. Source
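A common control against this kind of vendor-trust abuse is a payment hold. Any invoice whose bank details differ from the vendor master record, or that arrives from an unfamiliar domain, waits until someone confirms it by calling a number already on file. The Python sketch below illustrates that rule under stated assumptions. The `VendorRecord` and `Invoice` types, their field names, and the decision labels are hypothetical and are not taken from any particular finance system.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class VendorRecord:
    vendor_id: str
    iban: str           # bank account verified at onboarding
    phone_on_file: str  # callback number from the master record, never from the invoice


@dataclass(frozen=True)
class Invoice:
    vendor_id: str
    iban: str
    amount: float
    sender_domain: str


def _normalize_iban(iban: str) -> str:
    return iban.replace(" ", "").upper()


def payment_decision(
    invoice: Invoice,
    master: dict[str, VendorRecord],
    known_domains: dict[str, set[str]],  # lowercase domains each vendor normally sends from
) -> tuple[str, list[str]]:
    """Return ("pay" | "hold_for_callback" | "reject", reasons)."""
    vendor = master.get(invoice.vendor_id)
    if vendor is None:
        return "reject", ["vendor not in master record"]

    reasons = []
    if _normalize_iban(invoice.iban) != _normalize_iban(vendor.iban):
        reasons.append("bank details differ from master record")
    if invoice.sender_domain.lower() not in known_domains.get(invoice.vendor_id, set()):
        reasons.append("invoice sent from an unrecognized domain")

    if reasons:
        # Verify out of band using vendor.phone_on_file, not contact details in the message.
        return "hold_for_callback", reasons
    return "pay", []
```

The key design choice is that the callback number comes from the master record. A fraudulent invoice that supplies its own "verification" phone number therefore cannot satisfy the check.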

Ransomware also remains deeply intertwined with the broader identity and intrusion landscape. Verizon found that ransomware and extortion accounted for 32% of breaches, and that ransomware remained a top threat across 92% of industries. For executives assessing real enterprise exposure, that breadth is the key takeaway. Source

How AiTM Changes the MFA Story

Attackers are no longer just stealing passwords—they are stealing sessions

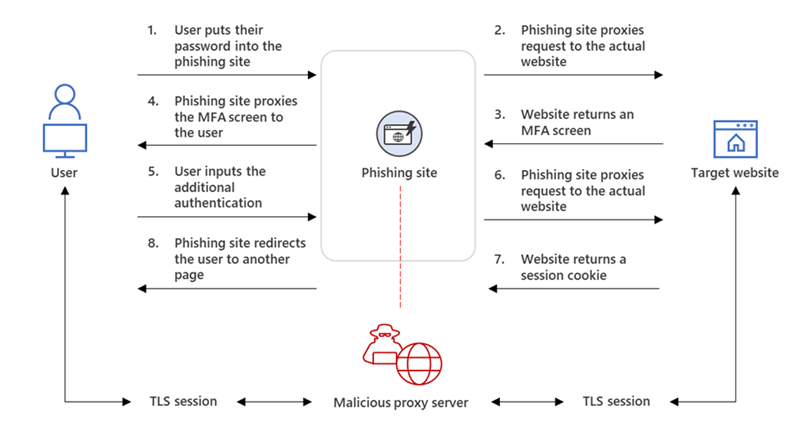

One of the most important developments in phishing is the rise of Adversary-in-the-Middle (AiTM) attacks. These attacks do not “crack” MFA in the traditional sense. Instead, they place a malicious reverse proxy between the victim and the legitimate sign-in page, allowing the attacker to capture the artifacts of an already authenticated session. As Microsoft explains, once the user logs in and completes MFA, the attacker can steal the session cookie and use it to impersonate the user without re-authenticating. Source

In practical terms, AiTM attacks may capture session cookies, and in some environments may also expose related session artifacts such as access or refresh tokens, depending on the authentication flow and application architecture. The central security problem is the same: once the attacker has possession of a valid authenticated session, traditional MFA prompts have already been satisfied. Source
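The proxy completes the real sign-in, so the identity provider typically sees that sign-in arrive from the attacker's infrastructure. The stolen cookie may later be replayed from yet another network. For that reason, defenders often run two comparisons: each sign-in against the user's usual context, and later session use against the context in which the session was issued. The Python sketch below illustrates both checks under stated assumptions. The `Context` fields, the two-signal revocation threshold, and the idea that ASN and country come from an IP-intelligence lookup are hypothetical choices. This is not any specific vendor's detection logic.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Context:
    asn: int         # autonomous system number of the client IP (assumed IP-intel lookup)
    country: str     # country code from an IP-geolocation lookup
    user_agent: str


def signin_anomalies(baseline: set[Context], signin: Context) -> list[str]:
    """Compare a new sign-in with contexts previously seen for this user."""
    findings = []
    if signin.asn not in {c.asn for c in baseline}:
        findings.append("sign-in from a network (ASN) not seen before")
    if signin.country not in {c.country for c in baseline}:
        findings.append("sign-in from a country not seen before")
    return findings


def replay_anomalies(issued: Context, observed: Context) -> list[str]:
    """Compare later use of a session with the context in which it was issued."""
    findings = []
    if observed.asn != issued.asn:
        findings.append("session used from a different network than sign-in")
    if observed.user_agent != issued.user_agent:
        findings.append("session used with a different user agent than sign-in")
    return findings


def should_revoke(findings: list[str], threshold: int = 2) -> bool:
    # Hypothetical policy: revoke the session and its refresh tokens
    # once at least `threshold` signals fire.
    return len(findings) >= threshold
```

In practice, signals like these would feed conditional-access or SIEM rules rather than run in isolation. The point of the sketch is that a satisfied MFA prompt says nothing about where the resulting session is later used.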

The scale of this technique has grown dramatically through phishing-as-a-service. Microsoft's Tycoon2FA analysis found that the kit helped enable campaigns responsible for tens of millions of phishing messages reaching more than 500,000 organizations each month worldwide. That is not just a threat trend; it is an industrialized ecosystem. Source

Why FIDO Passkeys Matter More Than Ever

The answer is not more prompts—it is phishing-resistant authentication

If AiTM steals authenticated sessions, then the strongest defensive move is to reduce the attacker's ability to proxy the login in the first place. CISA states that the only widely available phishing-resistant authentication is FIDO/WebAuthn, while the FIDO Alliance explains that passkeys rely on asymmetric cryptography and domain-bound authentication. In simple terms, the sign-in is cryptographically tied to the legitimate website. That makes proxy replay far more difficult than with phishable factors such as SMS codes, one-time passwords, or push prompts that attackers can abuse through prompt fatigue. Source Source

That does not make every implementation invulnerable. Malware on an endpoint can still create risk, especially with software-based authenticators or compromised devices. But hardware-backed security keys and properly implemented passkeys materially raise the bar, particularly for administrators, finance teams, executives, and help desk staff who are frequent social-engineering targets. Source Source
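The domain binding described above is visible in the data a WebAuthn authenticator returns. The browser records the page's origin in `clientDataJSON`, and the authenticator data begins with a SHA-256 hash of the relying party ID. An assertion produced through a look-alike proxy domain therefore does not match what the legitimate server expects. The Python sketch below shows only the server-side challenge, origin, rpIdHash, and user-presence checks. It deliberately omits the signature verification with the stored credential public key, which a real WebAuthn library must also perform. The function names and sample domains are illustrative.

```python
from __future__ import annotations

import base64
import hashlib
import hmac
import json


def b64url_decode(data: str) -> bytes:
    """Decode unpadded base64url, as used for the WebAuthn challenge."""
    return base64.urlsafe_b64decode(data + "=" * (-len(data) % 4))


def check_origin_binding(
    client_data_json: bytes,
    authenticator_data: bytes,
    expected_challenge: bytes,
    expected_origin: str,
    rp_id: str,
) -> list[str]:
    """Return a list of problems; an empty list means these checks passed.

    Signature verification with the stored credential public key is NOT done here.
    """
    errors = []
    client_data = json.loads(client_data_json)
    if client_data.get("type") != "webauthn.get":
        errors.append("not an authentication assertion")
    if not hmac.compare_digest(b64url_decode(client_data.get("challenge", "")), expected_challenge):
        errors.append("challenge mismatch")
    if client_data.get("origin") != expected_origin:
        errors.append("origin mismatch")

    # authenticatorData layout: 32-byte rpIdHash, 1 flags byte, 4-byte signature counter, ...
    if len(authenticator_data) < 37:
        errors.append("authenticator data too short")
        return errors
    if not hmac.compare_digest(authenticator_data[:32], hashlib.sha256(rp_id.encode()).digest()):
        errors.append("rpIdHash mismatch")
    if not authenticator_data[32] & 0x01:  # UP (user present) flag
        errors.append("user presence flag not set")
    return errors
```

In practice, the browser usually refuses to use a credential on the wrong domain at all. Checks like these are the server's matching safeguard, so a relayed assertion carrying a phishing origin is rejected rather than trusted.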

The Rise of Voice Fraud

AI cloning has made “sounding real” a serious security problem

Voice-based fraud is moving from novelty to operational threat. Attackers are increasingly using phone calls, voicemails, and callback prompts to impersonate colleagues, executives, vendors, or family members. Very short audio samples may be sufficient for convincing AI-generated voice impersonation, and that is now enough to support real-world fraud and vishing attempts. The FTC has warned explicitly about the harms of AI-enabled voice cloning. Meanwhile, vendors such as Pindrop and TruthScan position their tools around synthetic audio detection and enterprise voice authentication. Source Source Source

How do detectors distinguish voice cloning from broader deepfakes? In general, audio-clone detection focuses on acoustic anomalies, compression artifacts, cadence irregularities, and other synthetic fingerprints in speech. Video deepfake detection adds a different layer: lip-sync consistency, facial artifacts, lighting mismatches, and frame-level manipulation. The defensive lesson is simple: hearing a familiar voice is no longer a reliable signal of authenticity. Source Source

How to Implement DMARC Without Breaking Email

The smartest rollout is gradual, disciplined, and report-driven

Email authentication remains one of the highest-leverage controls for reducing spoofing and protecting brand trust. The core building blocks work together, but they serve different purposes. SPF verifies whether a sending server is authorized to send mail for a domain. DKIM adds a cryptographic signature so receiving servers can verify message integrity and sender authenticity. DMARC sits above both, requiring alignment with the visible “From” domain and telling receiving systems what to do when authentication fails. Google Workspace Admin Help DMARC.org
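Alignment is the part of DMARC most often misunderstood. A message passes DMARC when SPF passes for a domain aligned with the visible From domain, or when DKIM passes for a signing (d=) domain aligned with it. Relaxed mode (`r`) requires only the same organizational domain, while strict mode (`s`) requires an exact match. The Python sketch below assumes a naive organizational-domain rule: the last two labels, plus a tiny hard-coded list of multi-label suffixes. Real implementations use the Public Suffix List instead.

```python
from __future__ import annotations

# Naive stand-in for the Public Suffix List lookup that DMARC implementations use.
MULTI_LABEL_SUFFIXES = {"co.uk", "com.au", "co.jp"}


def organizational_domain(domain: str) -> str:
    labels = domain.lower().rstrip(".").split(".")
    if len(labels) >= 3 and ".".join(labels[-2:]) in MULTI_LABEL_SUFFIXES:
        return ".".join(labels[-3:])
    return ".".join(labels[-2:])


def aligned(from_domain: str, auth_domain: str, mode: str = "r") -> bool:
    """Relaxed ("r"): same organizational domain. Strict ("s"): identical domains."""
    if mode == "s":
        return from_domain.lower().rstrip(".") == auth_domain.lower().rstrip(".")
    return organizational_domain(from_domain) == organizational_domain(auth_domain)


def dmarc_pass(
    from_domain: str,
    spf_pass: bool, spf_domain: str,    # SPF result and the envelope-sender domain it checked
    dkim_pass: bool, dkim_domain: str,  # DKIM result and the signature's d= domain
    aspf: str = "r", adkim: str = "r",
) -> bool:
    """DMARC passes if at least one of SPF or DKIM passes and is aligned."""
    spf_ok = spf_pass and aligned(from_domain, spf_domain, aspf)
    dkim_ok = dkim_pass and aligned(from_domain, dkim_domain, adkim)
    return spf_ok or dkim_ok


# A third-party sender passing SPF only for its own bounce domain fails DMARC
# unless it also signs with DKIM for your domain.
print(dmarc_pass("example.com", True, "bounce.esp-vendor.net", False, ""))  # False
print(dmarc_pass("example.com", True, "bounce.esp-vendor.net", True, "example.com"))  # True
```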

Google recommends a phased approach. Start with p=none to collect aggregate reports, identify all legitimate senders, correct SPF and DKIM gaps, and then move gradually toward quarantine and finally reject, using the pct tag to control rollout risk. DMARC.org also emphasizes keeping SPF records simple, maintaining identifier alignment, and rotating DKIM keys regularly. Source Source
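During the p=none phase, aggregate (rua) reports are the main source of visibility. They are XML files that summarize, per sending IP, how many messages passed or failed the aligned DKIM and SPF checks. Each failing source is either a legitimate sender whose SPF or DKIM still needs fixing, or someone spoofing the domain. The Python sketch below tallies those failing sources. It assumes the element names used in DMARC aggregate reports and an already-decompressed file, since reports usually arrive zipped or gzipped. It also strips any XML namespace, so both namespaced and plain reports parse the same way.

```python
from collections import Counter
import xml.etree.ElementTree as ET


def _strip_namespaces(root: ET.Element) -> None:
    # Some reporters namespace their XML; drop "{uri}" prefixes so lookups work either way.
    for element in root.iter():
        if "}" in element.tag:
            element.tag = element.tag.split("}", 1)[1]


def failing_sources(report_xml: str) -> Counter:
    """Tally message counts per (source IP, header From) that failed both aligned checks."""
    root = ET.fromstring(report_xml)
    _strip_namespaces(root)
    failures = Counter()
    for record in root.iter("record"):
        row = record.find("row")
        if row is None:
            continue
        source_ip = row.findtext("source_ip", default="unknown")
        count = int(row.findtext("count", default="0"))
        # policy_evaluated holds the DMARC-aligned DKIM and SPF results.
        dkim = row.findtext("policy_evaluated/dkim", default="fail")
        spf = row.findtext("policy_evaluated/spf", default="fail")
        header_from = record.findtext("identifiers/header_from", default="unknown")
        if dkim != "pass" and spf != "pass":
            failures[(source_ip, header_from)] += count
    return failures
```

Run across each day's reports, sources that keep appearing are the ones to either authorize or investigate before moving the policy toward quarantine.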

A safe starting example is:

v=DMARC1; p=none; rua=mailto:dmarc-reports@yourdomain.com; aspf=r; adkim=r

A stricter, mature example, aligned with Google's documentation (Source), is:

v=DMARC1; p=reject; rua=mailto:postmaster@example.com,mailto:dmarc@example.com; pct=100; adkim=s; aspf=s
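A DMARC policy is published as a DNS TXT record at `_dmarc.` followed by the domain name. It is a semicolon-separated list of tag=value pairs, with `v=DMARC1` first and a `p` policy tag. The Python sketch below parses and sanity-checks records like the two examples above before they are published. It checks only a subset of tags, and its insistence on `mailto:` report destinations is a simplifying assumption rather than a full reading of the specification.

```python
from __future__ import annotations


def parse_dmarc(record: str) -> dict[str, str]:
    """Split a DMARC TXT record into lowercase tag names and stripped values."""
    tags = {}
    for part in record.split(";"):
        part = part.strip()
        if not part:
            continue  # tolerate a trailing semicolon
        name, sep, value = part.partition("=")
        if not sep:
            raise ValueError(f"malformed tag: {part!r}")
        tags[name.strip().lower()] = value.strip()
    return tags


def validate_dmarc(record: str) -> list[str]:
    """Return a list of problems; an empty list means the checked tags look sane."""
    try:
        tags = parse_dmarc(record)
    except ValueError as exc:
        return [str(exc)]

    problems = []
    if record.strip().split(";")[0].replace(" ", "") != "v=DMARC1":
        problems.append("record must start with v=DMARC1")
    if tags.get("p", "").lower() not in {"none", "quarantine", "reject"}:
        problems.append("p must be none, quarantine, or reject")
    pct = tags.get("pct", "100")
    if not pct.isdigit() or int(pct) > 100:
        problems.append("pct must be an integer from 0 to 100")
    for tag in ("adkim", "aspf"):
        if tags.get(tag, "r") not in {"r", "s"}:
            problems.append(f"{tag} must be r or s")
    # Simplifying assumption: only mailto: report destinations are accepted here.
    if "rua" in tags and not all(u.strip().startswith("mailto:") for u in tags["rua"].split(",")):
        problems.append("rua destinations should be mailto: URIs")
    return problems


print(validate_dmarc("v=DMARC1; p=quarantine; pct=150"))
# ['pct must be an integer from 0 to 100']
```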

The key point is not speed. It is visibility. Organizations that rush to enforcement without auditing third-party senders often break legitimate mail. Organizations that monitor carefully and enforce progressively build lasting protection.

Why Awareness Training Must Shift From Compliance to Behavior

The best programs change reflexes, not just quiz scores

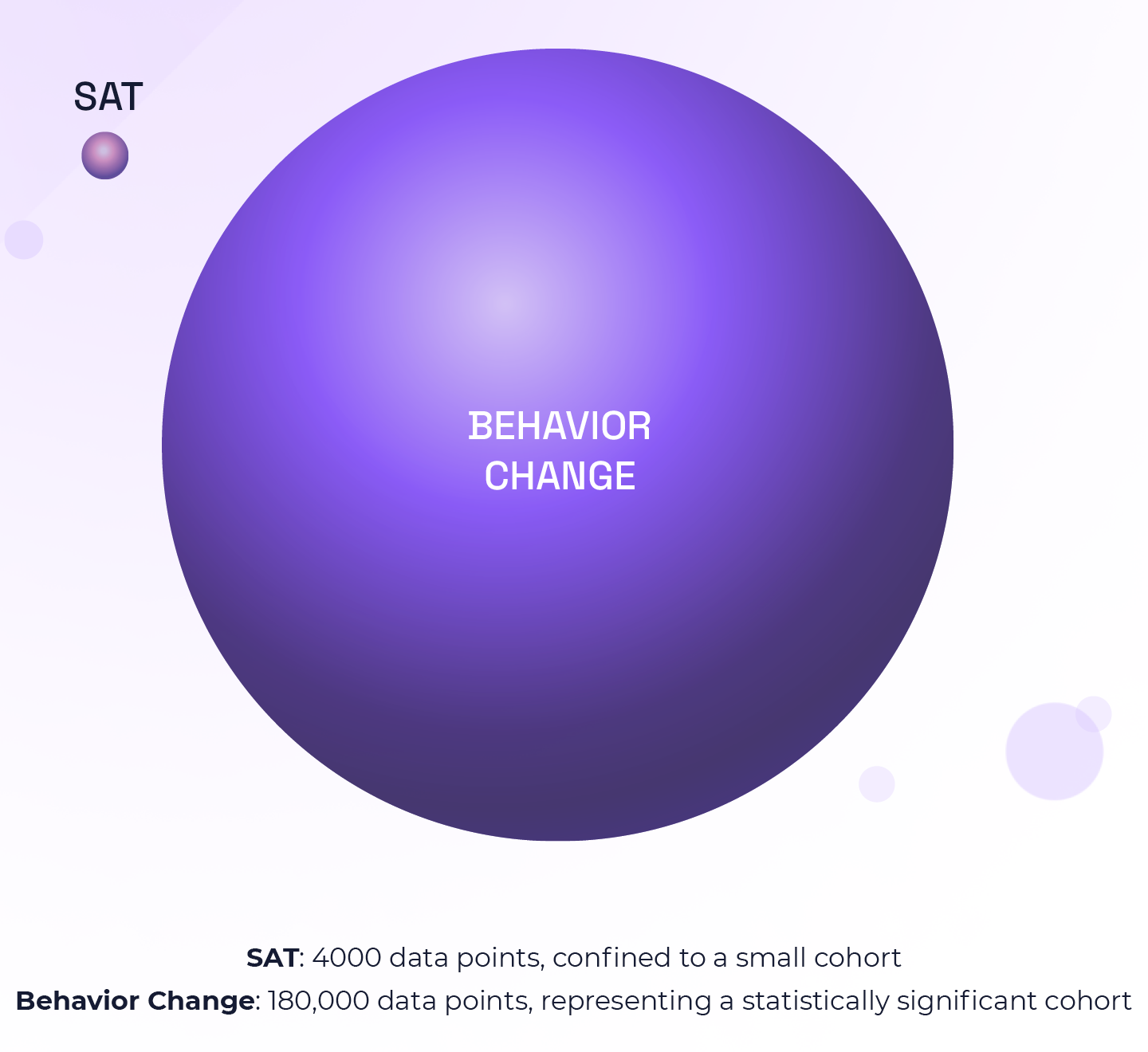

Traditional awareness programs often teach employees to look for urgency, spelling mistakes, and obvious red flags. But AI-generated phishing increasingly removes those clues. That is why static, quarterly compliance training is no longer enough. Hoxhunt reports that behavior-based security programs produced a 6x improvement in reporting social engineering attacks within six months and an 87% reduction in malicious clicks. Source

That is a profound shift. The purpose of modern awareness training is not to help employees pass a module. It is to help them pause, verify, escalate, and use out-of-band confirmation when faced with routine-looking requests that exploit speed and familiarity. The strongest programs teach what fraud really looks like now: calendar invites, Teams impersonation, supplier invoice changes, HR lures, callback prompts, QR codes, and executive pressure tactics. Source
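Behavior-based programs are usually judged on a few simple rates: how often people click, how often they report, and how quickly reports arrive. The Python sketch below computes those rates from simulation results and expresses change between two periods as a relative change. The `SimulationResult` record is hypothetical, and this is not Hoxhunt's methodology. For reference, an 87% reduction in clicks means the later click rate is 13% of the earlier one.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class SimulationResult:
    user: str
    clicked: bool
    reported: bool
    seconds_to_report: float | None  # None if the user never reported


def program_metrics(results: list[SimulationResult]) -> dict[str, float]:
    if not results:
        raise ValueError("no simulation results")
    total = len(results)
    report_times = [r.seconds_to_report for r in results
                    if r.reported and r.seconds_to_report is not None]
    return {
        "click_rate": sum(r.clicked for r in results) / total,
        "report_rate": sum(r.reported for r in results) / total,
        "median_seconds_to_report": median(report_times) if report_times else float("nan"),
    }


def relative_change(before: float, after: float) -> float:
    """E.g. -0.87 means an 87% reduction; +5.0 means six times the earlier value."""
    return (after - before) / before
```

Median time-to-report is used rather than the mean so that a few very late reports do not mask a fast-reacting majority.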

How AI Helps Detect Phishing—and Where It Still Falls Short

Defenders are using machine learning too, but governance matters

AI is not only helping attackers. It is also strengthening defense. Google says machine-learning-based detection contributes to 99.9% spam detection accuracy, while Safe Browsing protections warn users about dangerous links across Gmail and billions of browsers. Microsoft adds that modern phishing detection must look beyond URL reputation alone and instead rely on campaign-level signals, sender behavior, message content, and anomalous authentication patterns. That matters because increasingly sophisticated campaigns abuse legitimate platforms, trusted domains, and redirect chains to blend in with normal traffic. Source Source
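To make "campaign-level and behavioral signals" concrete, the Python sketch below combines several of them into a single score instead of relying on URL reputation alone. It is a rule-based stand-in, not a machine-learning model. The signals, weights, and quarantine threshold are hypothetical, and a real system would learn or tune them from labeled data.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class MessageSignals:
    first_time_sender: bool
    display_name_matches_executive: bool
    from_domain: str
    reply_to_domain: str
    link_domain_ages_days: tuple[int, ...] = ()
    requests_payment_or_credentials: bool = False
    dmarc_result: str = "pass"


def risk_score(m: MessageSignals) -> tuple[int, list[str]]:
    """Sum hypothetical weights for each signal that fires; return (score, reasons)."""
    checks = [
        (m.first_time_sender, 2, "first message from this sender"),
        (m.first_time_sender and m.display_name_matches_executive, 3,
         "executive display name on an unfamiliar sender"),
        (m.reply_to_domain.lower() != m.from_domain.lower(), 2,
         "Reply-To domain differs from From domain"),
        (any(age < 30 for age in m.link_domain_ages_days), 3,
         "links to a domain registered less than 30 days ago"),
        (m.requests_payment_or_credentials, 2, "asks for payment or credentials"),
        (m.dmarc_result != "pass", 3, "DMARC did not pass"),
    ]
    fired = [(weight, reason) for condition, weight, reason in checks if condition]
    return sum(weight for weight, _ in fired), [reason for _, reason in fired]


QUARANTINE_THRESHOLD = 6  # hypothetical cut-off for holding a message for review

lookalike = MessageSignals(
    first_time_sender=True,
    display_name_matches_executive=True,
    from_domain="examp1e-corp.example",
    reply_to_domain="mailbox.example",
    link_domain_ages_days=(4,),
    requests_payment_or_credentials=True,
)
score, reasons = risk_score(lookalike)
print(score, score >= QUARANTINE_THRESHOLD)  # 12 True
```

The example message passes DMARC, as a look-alike domain with its own valid records would. It is still held for review because the behavioral and content signals stack up.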

But AI-driven detection is not a silver bullet. Highly adaptive phishing can still evade static models, especially when each lure is unique. And as organizations analyze more email, voice, and behavioral data, privacy and governance risks increase as well. The European Data Protection Board has stressed that AI systems processing personal data must still comply with GDPR principles. Meanwhile, the EU AI Act tightly restricts certain biometric inferences, including emotion recognition in the workplace. Source Source

What the EU AI Act Means for Inference Risk

Not every inference is prohibited—but biometric emotion inference is tightly constrained

The EU AI Act does not provide a general standalone definition of “inferences,” but it does regulate the concept through specific system categories. Under Article 3, an emotion recognition system is one that identifies or infers emotions or intentions based on biometric data. Article 5 prohibits the use of AI systems to infer emotions of natural persons in workplaces and educational institutions, except for medical or safety reasons. Source Source

The penalties are significant. Article 99 allows fines of up to €35 million or 7% of total worldwide annual turnover for the preceding financial year, whichever is higher, for prohibited AI practices. For SMEs, including startups, the cap is whichever of those two amounts is lower. For organizations using AI to analyze communications, biometrics, or behavioral signals, this is more than a compliance footnote. It is a governance imperative. Source
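The Article 99 ceiling for prohibited practices reduces to a small formula. For most organizations it is the higher of €35 million and 7% of worldwide annual turnover; for SMEs it is the lower of the two. The Python sketch below illustrates only that arithmetic. It does not model how regulators set an actual fine, which can be any amount up to the ceiling, and the function name is hypothetical.

```python
def max_fine_prohibited_practice(worldwide_turnover_eur: float, is_sme: bool) -> float:
    """Upper bound of the fine for a prohibited AI practice under Article 99.

    Non-SMEs: the higher of EUR 35 million and 7% of worldwide annual turnover.
    SMEs (including start-ups): the lower of the two.
    """
    fixed_cap = 35_000_000
    turnover_cap = worldwide_turnover_eur * 7 / 100  # avoids 0.07 float rounding noise
    return float(min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap))


print(max_fine_prohibited_practice(1_000_000_000, is_sme=False))  # 70000000.0
print(max_fine_prohibited_practice(20_000_000, is_sme=True))      # 1400000.0
```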

Conclusion

The future of phishing defense belongs to organizations that treat trust as infrastructure

The old cybersecurity narrative cast phishing as a user-awareness problem. That is no longer sufficient. Today’s fraud landscape is a trust-manipulation problem powered by AI, scaled through identity abuse, and amplified across every channel where people work, communicate, and make quick decisions.

The strongest response is not one tool or one training program. It is a layered strategy: phishing-resistant MFA, disciplined email authentication, rapid session revocation, multi-channel fraud playbooks, behavior-based training, real-time detection, and governance for AI-enabled analysis. The organizations that will navigate this era best are the ones that stop treating phishing as a nuisance at the inbox edge—and start treating it as a business-wide attack on identity, workflow, and confidence.

One-Page Executive Summary

Priority actions to reduce phishing, fraud, and digital impersonation risk

Top Risks

- AI-generated phishing is surging, with Hoxhunt reporting a 14x increase in AI-generated phishing during the 2025 holiday season and a peak of 56% of reported attacks in December. Source

- Multi-channel social engineering is expanding, with around 40% of phishing campaigns extending beyond email and vishing up 442% between H1 and H2 2024. Source Source

- Identity abuse is a primary intrusion path, with valid account abuse responsible for 35% of cloud incidents in H1 2024. Source

- BEC remains highly costly, with $2.77 billion in U.S. losses reported in 2024. Source

- Ransomware/extortion accounted for 32% of breaches and remained a top threat across 92% of industries. Source

Most Effective Mitigations

- Move high-risk users to phishing-resistant MFA such as FIDO2 security keys or passkeys. Source Source

- Revoke sessions aggressively after suspicious sign-ins and shorten session lifetimes for sensitive roles. Source

- Deploy SPF, DKIM, and DMARC in phases, starting with monitoring and progressing to enforcement after sender inventory and alignment review. Source Source

- Modernize awareness training from compliance modules to behavior-based simulations focused on reporting, verification, and out-of-band checks. Source

- Expand defenses beyond email to include vishing, callback scams, QR-code phishing, and collaboration-platform impersonation. Source Source

- Use AI-assisted detection with human oversight, focusing on sender behavior, message context, anomalous sign-ins, and campaign-level signals—not URL reputation alone. Source Source

30–90 Day Action Plan

Next 30 days

- Identify privileged, finance, and help-desk users for passkey/FIDO2 rollout.

- Inventory all email senders and publish or review SPF, DKIM, and DMARC records.

- Update incident playbooks to include vishing, callback fraud, and QR phishing.

Next 60 days

- Move DMARC from p=none to p=quarantine after sender remediation, using pct to phase in enforcement while continuing to review aggregate reports.

- Run targeted AiTM, BEC, and voice-fraud simulations.

- Enable stronger link protection, click-time scanning, and session monitoring.

Next 90 days

- Measure reporting speed, malicious-click reduction, and identity-risk metrics.

- Extend phishing-resistant MFA to broader employee groups.

- Review AI-analysis privacy controls and regulatory exposure under GDPR and the EU AI Act.

Executive Bottom Line

The most resilient organizations will be the ones that treat trust as a control surface. That means securing identities, authenticating communications, training for behavior under pressure, and detecting deception wherever it appears—not just in the inbox.

Five Corresponding Image Concepts

- AI Phishing Surge — a high-end editorial illustration of a corporate inbox flooded by polished AI-generated phishing emails, with brand impersonation, calendar invites, QR codes, and mobile alerts.

- AiTM Session Hijack — a clean cybersecurity infographic showing user → malicious proxy → legitimate sign-in → stolen authenticated session.

- Voice Clone Vishing Threat — an executive receiving a convincing fraudulent voice call, with waveform overlays and trust-vs-deception visual contrast.

- DMARC / SPF / DKIM Defense Stack — a modern security architecture visual showing authenticated email flowing through SPF, DKIM, and DMARC enforcement layers.

- Behavior-Based Security Training — employees in a modern office detecting phishing across email, chat, QR code, and phone channels, emphasizing awareness as active defense.